When teams run experiments—A/B tests on a website, marketing campaigns, product feature rollouts, or clinical-style interventions—they want a clear answer: did the change make a real difference, or did the result happen by chance? Power analysis is the planning step that helps you design experiments with enough data to detect an effect of a meaningful size. It prevents a common mistake: running an experiment that is too small to be conclusive, then making decisions based on noisy outcomes. If you are learning experimentation through a data science course, power analysis becomes one of the most practical statistical tools because it links business constraints (time, cost, traffic) to scientific confidence.

For learners enrolled in a data scientist course in Pune, power analysis is also a bridge between theory and execution. It turns probability concepts into concrete decisions like “How many users do we need?” or “How long should the test run?”

What Power Analysis Actually Answers

Power analysis tells you the smallest sample size needed to detect an effect of a given size at a chosen significance level and target power. Simply put, it answers: “How much data do I need so that if there is a real effect, my experiment is likely to find it?”

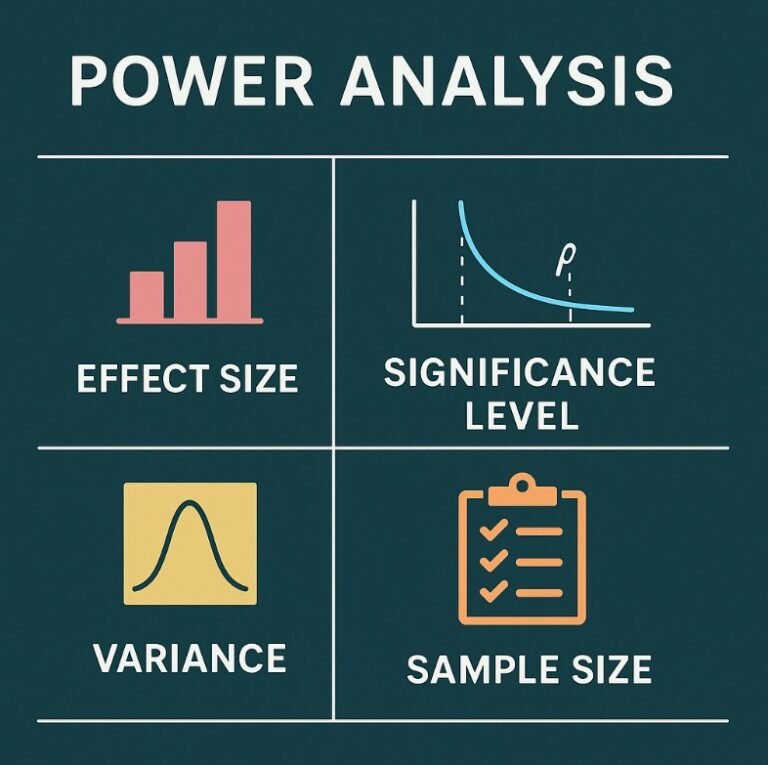

To understand this, it helps to define four key elements:

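- Effect size: The smallest difference you care about detecting (for example, a 2% improvement in conversion rate). State whether you mean a relative lift or an absolute change in percentage points, because the two imply very different sample sizes.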

- Significance level (alpha): The probability of concluding that there is an effect when there isn’t one (a false positive). A common choice is 0.05.

- Power (1 – beta): The probability of detecting a real effect of the specified size, where beta is the false-negative rate. Common targets are 80% or 90%.

- Variability: How noisy the metric is. More variability usually requires larger samples.

Power analysis does not guarantee success. It helps ensure your design is not underpowered, meaning you are not running an experiment that is unlikely to detect the effect even if it is real. That protection is only as good as the effect size and variability you assumed when planning.
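
To make the four elements concrete, here is a minimal sketch that estimates power for a conversion test at a given sample size. It assumes a two-sided, two-proportion z-test with a normal approximation and an unpooled standard error, which is a simplification; the function name `approx_power` and the 10% and 12% rates are illustrative, not taken from any particular tool or dataset.

```python
from math import sqrt
from statistics import NormalDist


def approx_power(p_control, p_treatment, n_per_group, alpha=0.05):
    """Approximate power of a two-sided, two-proportion z-test.

    Hypothetical helper: uses the normal approximation with an unpooled
    standard error, so treat the result as a planning estimate only.
    """
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)  # critical value set by alpha
    # Variability: standard error of the difference between the two rates.
    se = sqrt(
        p_control * (1 - p_control) / n_per_group
        + p_treatment * (1 - p_treatment) / n_per_group
    )
    # Effect size expressed in standard-error units.
    shift = abs(p_treatment - p_control) / se
    # Chance the test statistic falls beyond either critical value.
    return std_normal.cdf(shift - z_crit) + std_normal.cdf(-shift - z_crit)


if __name__ == "__main__":
    # Illustrative rates: 10% baseline, 12% under treatment.
    for n in (500, 2000, 5000):
        print(n, round(approx_power(0.10, 0.12, n), 3))
```

With the effect size, alpha, and variability held fixed, the only way to raise power is to raise the sample size, which is why the loop above prints a rising sequence.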

Why Underpowered Experiments Are Risky

Many experiments fail not because there is no effect, but because the sample size is too small. This creates two problems.

First, you might miss a real improvement and discard a good change. That is a false negative, and it can slow down progress because promising ideas get rejected prematurely.

Second, small samples can produce unstable results. An early “win” may flip to a “loss” as more data arrives, so if you stop too early you might launch a change based on random variation. This is one reason power analysis features in a data science course curriculum: it reduces wasted testing cycles and improves decision quality.
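
Both problems can be illustrated with a small simulation. The sketch below assumes a hypothetical test where the treatment truly converts at 12% against a 10% control, with only 500 users per group, and analyses each simulated experiment with a pooled two-proportion z-test. The function name, rates, and sample size are made up for illustration.

```python
import random
from math import sqrt
from statistics import NormalDist


def simulate_underpowered(p_control=0.10, p_treatment=0.12, n_per_group=500,
                          n_experiments=1000, alpha=0.05, seed=42):
    """Simulate many small A/B tests in which the treatment truly is better.

    Hypothetical helper. Returns a tuple:
    (share of experiments reaching significance,
     share of experiments where the treatment looked worse than control).
    """
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    significant = 0
    looked_worse = 0
    for _ in range(n_experiments):
        # Draw conversions for each group, one simulated user at a time.
        conv_c = sum(rng.random() < p_control for _ in range(n_per_group))
        conv_t = sum(rng.random() < p_treatment for _ in range(n_per_group))
        rate_c = conv_c / n_per_group
        rate_t = conv_t / n_per_group
        # Pooled two-proportion z-test.
        pooled = (conv_c + conv_t) / (2 * n_per_group)
        se = sqrt(2 * pooled * (1 - pooled) / n_per_group)
        if se > 0 and abs(rate_t - rate_c) / se > z_crit:
            significant += 1
        if rate_t < rate_c:
            looked_worse += 1
    return significant / n_experiments, looked_worse / n_experiments


if __name__ == "__main__":
    detected, worse = simulate_underpowered()
    print(f"significant: {detected:.1%}, treatment looked worse: {worse:.1%}")
```

Under these assumptions most simulated experiments miss the real improvement, and a noticeable minority show the better variant losing.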

The Core Inputs: Effect Size, Baseline, and Minimum Detectable Effect

In most business experiments, the hardest part is choosing the effect size. The effect size should not be “any difference at all.” It should reflect what is worth acting on. For example, if a 0.2% lift in conversion rate will not cover implementation costs, there is no need to power the test to detect it. Instead, you define a minimum detectable effect (MDE): the smallest lift that justifies a decision.

You also need a baseline rate or baseline mean. For conversion tests, this is your current conversion rate. For continuous metrics (like revenue per user), it is the current average and standard deviation.

A practical way to think about it:

- Smaller MDE → larger sample size needed

- Higher required power → larger sample size needed

- Lower alpha (stricter false-positive control) → larger sample size needed

- Higher noise/variance → larger sample size needed
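
These four relationships can be read off a standard normal-approximation formula for the per-group sample size when comparing two means. The sketch assumes a two-sided test, equal group sizes, and a common standard deviation; the symbols are introduced here for illustration and do not come from a specific tool.

$$
n \approx \frac{2\,\left(z_{1-\alpha/2} + z_{1-\beta}\right)^{2}\,\sigma^{2}}{\delta^{2}}
$$

Here $\delta$ is the MDE, $\sigma$ is the standard deviation of the metric, and $z_{q}$ is the $q$-quantile of the standard normal distribution. Halving $\delta$ roughly quadruples $n$, a lower $\alpha$ or a higher power $1-\beta$ increases the $z$ terms, and a larger $\sigma$ increases $n$ in proportion to the variance.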

This trade-off thinking is an important skill for learners taking a data scientist course in Pune, because real projects always have constraints like limited traffic, budget, or time.

Common Types of Power Analysis in Practice

Power analysis depends on the statistical test and metric type. The most common situations include:

Proportion Metrics (A/B Tests)

If you are testing conversion rate, signup rate, click-through rate, or churn rate, you are dealing with proportions. Power analysis here typically uses formulas based on the expected difference between two proportions and the variability implied by those proportions.
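
As a rough sketch of such a formula, the function below computes a per-group sample size for a two-sided comparison of two proportions using the normal approximation. It assumes equal group sizes and an MDE given in absolute terms; the name `sample_size_two_proportions` and the example rates are hypothetical, and dedicated tools may apply slightly different variants (pooled variance, continuity correction).

```python
from math import ceil
from statistics import NormalDist


def sample_size_two_proportions(baseline, mde_abs, alpha=0.05, power=0.80):
    """Per-group sample size for a two-sided two-proportion test.

    Hypothetical helper based on the normal approximation.
    baseline: current conversion rate (for example 0.10).
    mde_abs:  minimum detectable effect as an absolute change (for example 0.02).
    """
    p1 = baseline
    p2 = baseline + mde_abs
    if mde_abs == 0 or not (0 < p1 < 1 and 0 < p2 < 1):
        raise ValueError("rates must lie strictly between 0 and 1, with a nonzero MDE")
    std_normal = NormalDist()
    z_alpha = std_normal.inv_cdf(1 - alpha / 2)
    z_beta = std_normal.inv_cdf(power)
    # Variability implied by the two proportions themselves.
    variance_sum = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance_sum / mde_abs ** 2)


if __name__ == "__main__":
    # Illustrative inputs: 10% baseline, 2 percentage point MDE.
    print(sample_size_two_proportions(0.10, 0.02))
    # The same baseline with a 1 percentage point MDE needs far more users.
    print(sample_size_two_proportions(0.10, 0.01))
```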

Continuous Metrics

If your metric is average order value, session duration, or revenue per user, you use power analysis based on averages and standard deviations. The important part is to estimate variability accurately, using either past data or a small test run.
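
A minimal sketch of that process: estimate the standard deviation from past observations, then plug it into the normal-approximation sample size for comparing two means. The function name and the order values below are invented for illustration. Heavily skewed metrics such as revenue per user may need more data than this approximation suggests.

```python
from math import ceil
from statistics import NormalDist, stdev


def sample_size_two_means(historical_values, mde, alpha=0.05, power=0.80):
    """Per-group sample size for a two-sided comparison of two means.

    Hypothetical helper. Assumes equal group sizes and that both groups
    share the standard deviation estimated from historical_values.
    mde is the smallest difference in means worth detecting, in metric units.
    """
    sigma = stdev(historical_values)  # sample standard deviation from past data
    std_normal = NormalDist()
    z_alpha = std_normal.inv_cdf(1 - alpha / 2)
    z_beta = std_normal.inv_cdf(power)
    return ceil(2 * (z_alpha + z_beta) ** 2 * sigma ** 2 / mde ** 2)


if __name__ == "__main__":
    # Made-up order values standing in for past data or a small test run.
    past_order_values = [10, 12, 8, 15, 9, 11, 14, 7, 13, 11]
    print(sample_size_two_means(past_order_values, mde=1.0))
```

In practice the historical sample should be much larger than ten values, since an unstable variance estimate carries straight through to the sample size.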

Multiple Groups or Multiple Metrics

When testing more than two variants (A/B/C) or monitoring several primary metrics, sample size requirements can increase due to the need to correct for multiple comparisons. Teams often handle this by defining one primary metric and treating others as secondary.
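
To give a feel for the size of that increase, the sketch below assumes a Bonferroni correction, which is only one of several ways to correct for multiple comparisons, and reports how much the per-group sample size grows when alpha is split across comparisons. The function name is hypothetical, and the ratio relies on the normal-approximation formula in which sample size scales with the squared sum of the two z-values.

```python
from statistics import NormalDist


def bonferroni_sample_inflation(num_comparisons, alpha=0.05, power=0.80):
    """Ratio of per-group sample size with vs. without Bonferroni correction.

    Hypothetical helper. Assumes a two-sided test and that the effect size
    and variance are the same in both scenarios, so they cancel out.
    """
    std_normal = NormalDist()
    z_beta = std_normal.inv_cdf(power)
    z_unadjusted = std_normal.inv_cdf(1 - alpha / 2)
    # Bonferroni: each comparison is tested at alpha / num_comparisons.
    z_adjusted = std_normal.inv_cdf(1 - (alpha / num_comparisons) / 2)
    return ((z_adjusted + z_beta) / (z_unadjusted + z_beta)) ** 2


if __name__ == "__main__":
    # For example, an A/B/C test with two comparisons against control.
    for k in (1, 2, 3, 5):
        print(k, round(bonferroni_sample_inflation(k), 2))
```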

Practical Guidance and Common Mistakes

Power analysis helps most when it is used as a planning tool, not as a box-checking step. A sensible workflow looks like this:

- Define the decision: what action will you take if the effect is detected?

- Choose a primary metric and baseline value.

- Set alpha and target power.

- Define MDE based on business impact.

- Run the sample size calculation and estimate test duration.

- Re-check assumptions once you see early variance estimates (without peeking at significance).
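
The duration estimate in the workflow above can be sketched as simple arithmetic once a sample size is known. The function name, the traffic figures, and the choice to round up to whole weeks (so every weekday is represented equally) are assumptions for illustration.

```python
from math import ceil


def estimate_test_duration_days(n_per_group, num_groups, daily_eligible_users,
                                traffic_share=1.0, round_to_full_weeks=True):
    """Estimate how many days a test must run to reach its sample size.

    Hypothetical helper.
    traffic_share: fraction of eligible users actually enrolled in the test.
    round_to_full_weeks: round up to whole weeks to cover weekly cycles.
    """
    total_needed = n_per_group * num_groups
    daily_in_test = daily_eligible_users * traffic_share
    days = ceil(total_needed / daily_in_test)
    if round_to_full_weeks:
        days = ceil(days / 7) * 7
    return days


if __name__ == "__main__":
    # Illustrative inputs: 3,839 users per group, two groups, 1,000 users a day.
    print(estimate_test_duration_days(3839, 2, 1000))
```

If the estimated duration is longer than the business can wait, the inputs to revisit are the MDE, the share of traffic in the test, or the choice of metric, not the stopping rule.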

Common mistakes include picking an unrealistically large effect size just to reduce sample size, ignoring variance, stopping the experiment the moment the p-value dips below 0.05, and changing the metric mid-test. Another frequent issue is not accounting for seasonality or traffic changes, which can violate assumptions and reduce effective power.
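
The cost of stopping at the first significant p-value can be shown with a simulated A/A test, where both groups share the same true conversion rate and every "significant" result is a false positive. This is a sketch under made-up settings (10% conversion, 1,000 users per group, ten evenly spaced looks) using a pooled two-proportion z-test; the function name is hypothetical.

```python
import random
from math import sqrt
from statistics import NormalDist


def false_positive_rates(p=0.10, n_per_group=1000, n_looks=10,
                         n_experiments=1000, alpha=0.05, seed=7):
    """Simulate A/A tests (no true effect) under two stopping rules.

    Hypothetical helper. Returns a tuple:
    (false-positive rate when testing only once at the end,
     false-positive rate when stopping at the first significant look).
    """
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    step = n_per_group // n_looks  # users added per group between looks
    final_hits = 0
    peeking_hits = 0
    for _ in range(n_experiments):
        conv_a = conv_b = 0
        any_significant = False
        last_significant = False
        for look in range(1, n_looks + 1):
            conv_a += sum(rng.random() < p for _ in range(step))
            conv_b += sum(rng.random() < p for _ in range(step))
            n = look * step
            pooled = (conv_a + conv_b) / (2 * n)
            se = sqrt(2 * pooled * (1 - pooled) / n)
            last_significant = se > 0 and abs(conv_a - conv_b) / n / se > z_crit
            any_significant = any_significant or last_significant
        final_hits += last_significant   # significance at the planned end only
        peeking_hits += any_significant  # significance at any interim look
    return final_hits / n_experiments, peeking_hits / n_experiments


if __name__ == "__main__":
    final_only, with_peeking = false_positive_rates()
    print(f"test once at the end: {final_only:.1%}")
    print(f"stop at first significant look: {with_peeking:.1%}")
```

Testing once at the planned sample size keeps the false-positive rate near alpha, while checking repeatedly and stopping on the first significant look pushes it well above that.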

Conclusion

Power analysis is the statistical planning step that protects experiments from being inconclusive or misleading. By connecting effect size, acceptable error rates, and data variability to a minimum sample size, it helps teams run tests that can actually answer the question they care about. In a data science course, power analysis is a key concept because it supports reliable experimentation and better business decisions. For learners building practical skills through a data scientist course in Pune, mastering power analysis will help you design experiments that are efficient, interpretable, and truly decision-ready.
